The numbers don't lie. The measurement does.

That is not a technology problem. Every major platform works. The models are capable. The infrastructure exists.

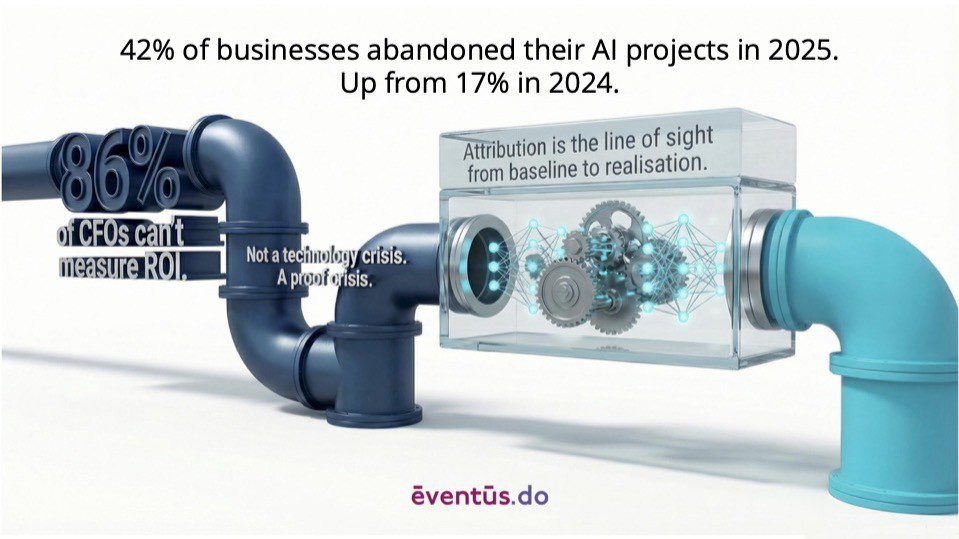

It is a proof problem.

86% of CFOs cannot measure ROI from their AI investments. MIT found that 61% of AI projects were approved without any post-deployment measurement framework in place. Billions committed on projections. No line of sight to whether they delivered. No mechanism to find out.

The AI didn’t fail. The evidence architecture did.

What the 10x Businesses Do Differently

First: the baseline.

Not an estimate. Not a survey. Real data from the systems already running: CRM, ERP, service desk, finance. The actual outcomes being achieved today, measured before anything changes. Most businesses have years of this data. Almost none of them use it as a starting point.
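As a rough illustration, a baseline can be as small as one number with its dates and its source attached. The sketch below assumes a hypothetical service desk export of (closed date, hours to resolve) pairs; the field names, the metric and the figures are invented for the example, not taken from any real system.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass(frozen=True)
class Baseline:
    """A measured starting point, with the dates and source that back it."""
    metric: str
    value: float
    period_start: date
    period_end: date
    source: str
    sample_size: int


def compute_baseline(records, metric, source, start, end):
    """Average the metric over records that fall inside the baseline window.

    `records` is a list of (date, value) pairs pulled from an existing system.
    """
    in_window = [value for day, value in records if start <= day <= end]
    if not in_window:
        raise ValueError("no records in the baseline window")
    return Baseline(metric, mean(in_window), start, end, source, len(in_window))


# Hypothetical export: (ticket closed date, hours to resolve).
records = [
    (date(2024, 1, 5), 10.0),
    (date(2024, 1, 19), 14.0),
    (date(2024, 2, 2), 12.0),
    (date(2024, 4, 1), 6.0),  # after the window closes, so excluded
]

baseline = compute_baseline(
    records,
    metric="hours_to_resolve",
    source="service_desk_export",  # hypothetical source label
    start=date(2024, 1, 1),
    end=date(2024, 3, 31),
)
print(baseline)
```

The point of the record is less the average than the metadata: a figure that carries its own window and source is much harder to contest later.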

Second: the commitment.

Not “we’re deploying AI.” Which process. Which metric. Which direction. What the improvement target is. A specific, defensible digital statement of what success looks like: line items identified, improvements quantified, all built before deployment and ready to optimise against.
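One way to make such a statement concrete is to write it down as a small record that names the process, the metric, the direction and the target. This is a minimal sketch, not a prescribed format; the `Commitment` class, its fields and the example figures are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Commitment:
    """A written-down statement of what success looks like (illustrative)."""
    process: str
    metric: str
    direction: str  # "increase" or "decrease"
    baseline_value: float
    target_value: float
    deadline: date

    def __post_init__(self):
        if self.direction not in ("increase", "decrease"):
            raise ValueError("direction must be 'increase' or 'decrease'")
        # The target has to sit on the improving side of the baseline.
        if self.direction == "increase":
            improving = self.target_value > self.baseline_value
        else:
            improving = self.target_value < self.baseline_value
        if not improving:
            raise ValueError("target does not improve on the baseline")

    def is_met(self, actual: float) -> bool:
        """True when the measured value reaches or beats the target."""
        if self.direction == "increase":
            return actual >= self.target_value
        return actual <= self.target_value


# Hypothetical example: cut average ticket resolution from 12 to 9 hours.
commitment = Commitment(
    process="ticket resolution",
    metric="hours_to_resolve",
    direction="decrease",
    baseline_value=12.0,
    target_value=9.0,
    deadline=date(2025, 6, 30),
)
print(commitment.is_met(8.5))
print(commitment.is_met(10.0))
```

Rejecting a target that does not improve on the baseline is a deliberate choice here: a commitment that cannot fail is not a commitment.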

Third: the loop.

What actually happened versus what was predicted. Not annually. Continuously. Adjust. Optimise. Refine. The loop is what turns an AI deployment into an AI programme. Without it, you’re running an experiment you cannot learn from.
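A sketch of what one turn of the loop might look like: line up predictions against actuals period by period and flag the gaps that need attention. The 10% tolerance, the monthly cadence and the figures are assumptions chosen for the example; a real programme would set its own.

```python
def review(predicted, actual, tolerance=0.10):
    """Compare actuals to predictions for each period that has both.

    Returns (period, relative_gap, needs_attention) tuples, where
    relative_gap is (actual - predicted) / predicted and needs_attention
    is True when the gap exceeds the tolerance in either direction.
    """
    findings = []
    for period, expected in predicted.items():
        if period not in actual:
            continue  # nothing measured yet for this period
        gap = (actual[period] - expected) / expected
        findings.append((period, round(gap, 3), abs(gap) > tolerance))
    return findings


# Hypothetical monthly figures for average hours to resolve a ticket.
predicted = {"2025-01": 11.0, "2025-02": 10.0, "2025-03": 9.0}
actual = {"2025-01": 11.0, "2025-02": 10.5, "2025-03": 10.8}

findings = review(predicted, actual)
flagged = [period for period, _, needs_attention in findings if needs_attention]
print(flagged)
```

Run monthly rather than annually, a check like this surfaces the March drift while there is still time to adjust.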

Most businesses skip straight to the target. No baseline to measure from. No commitment to what the journey should deliver. No capability to optimise toward the results that matter.

The Cost of Skipping the Baseline

Twelve months later, performance has improved. Or it appears to have. But you cannot say how much of that improvement the AI drove, because you do not know where you started with sufficient precision. You cannot separate the AI’s contribution from the sales team’s contribution, the market tailwind, the operational change you made in Q2, or the competitor who exited the market in Q3.

So you make a claim. The claim is contested. The conversation becomes political. The CFO remains unconvinced. The renewal is at risk.

This is not a hypothetical. It is the standard outcome for organisations that commit without measuring.

The fix is not complicated. A baseline takes weeks, not months. The data is already there. What is missing is the discipline to capture it before deployment rather than scrambling to reconstruct it afterwards.
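To show why the baseline matters for attribution, here is a minimal sketch of one common approach, difference-in-differences. It is not a method named in this article, and it assumes something extra: a comparable group that did not get the AI but was exposed to the same market tailwinds. All figures are invented.

```python
def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Estimate the change attributable to the intervention.

    Subtracts the change seen in a comparison group (no AI) from the change
    seen in the group that received it, so shared effects cancel out.
    Assumes both groups would otherwise have moved in parallel.
    """
    treated_change = treated_after - treated_before
    control_change = control_after - control_before
    return treated_change - control_change


# Hypothetical conversion rates (%), before and after deployment.
naive_uplift = 26.0 - 20.0  # what the headline claim would be
attributable = diff_in_diff(
    treated_before=20.0, treated_after=26.0,  # teams using the AI
    control_before=20.0, control_after=23.0,  # comparable teams without it
)
print(naive_uplift, attributable)
```

Both "before" figures are baselines. Without them there is nothing to subtract from, and the six-point headline claim cannot be narrowed to the three points the AI can plausibly own.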

What to Do This Quarter

Three questions worth asking now:

1. Do you know your pre-AI baseline: the starting position, expressed as a set of figures for the service you are set to improve? Not approximately. Specifically. With dates, with data sources.

2. Have you committed to what improvement looks like? Not a vague target. A specific, time-bound, measurable outcome that the initiative is accountable for.

3. Do you have a review cadence that compares actuals to predictions? Not an annual review. A loop tight enough to allow course correction before the investment is wasted.

If the answer to any of those is no, the 42% statistic is a warning, not a coincidence.

The Proof Crisis Is Solvable

Did it work?

The organisations that will extract lasting value from AI are not those with the most sophisticated models. They are those with the clearest line of sight from where they started to what they achieved, and the methodology to prove it.

Attribution is not a reporting function. It is a commercial capability. And right now, it is the most important one most AI programmes are missing.

What if you could prove the business impact?